Here’s how the Safebots architecture is already able to channel it for good…

Last week, Meta’s FAIR lab released TRIBE v2 — a foundation model trained on 500+ hours of fMRI brain scans from 700+ people. It can take any image, video, audio clip, or text passage and predict, at the level of 70,000 individual brain voxels, how a human brain will respond to it.

This isn’t merely sentiment analysis. This isn’t “will people click on this.” This is a model that predicts which specific brain regions activate — the reward circuitry, the threat response, the language network, the parts responsible for social bonding, memory encoding, and self-referential thought.

It’s open source. The weights are free to download. Anyone can run it today.

That’s worth sitting with for a moment.

The dual-use problem, stated plainly

Give someone a tool that predicts how a brain responds to content, and the optimization loop writes itself: generate a variant, score it, modify, repeat. Do this a million times without any human in the loop, and you can systematically converge on content that hijacks whatever neural target you choose — outrage, craving, tribal fear, compulsive engagement.

This is not hypothetical. Social media platforms have been doing cruder versions of this for years using behavioral signals (clicks, watch time, shares). TRIBE v2 replaces that behavioral proxy with a predicted neural signal, learned from real fMRI data, and removes the need for real users in the iteration loop entirely.

The question isn’t whether this capability will be used. It will. The question is whether it can be used for something other than maximizing addiction and division.

We think it can — because we’ve already been building the infrastructure to do exactly that.

What we built before we knew this model existed

A few weeks ago we wrote about building cultural infrastructure with AI — specifically a holiday greeting system that shows people celebrations from cultures unlike their own, in their own language, surfacing moments of connection across religious and cultural lines.

The architecture we described there turns out to be exactly the right container for a model like TRIBE v2:

- AI generates content offline, not in real-time response to users

- Everything passes through a structured validation and scoring pipeline

- Unsafe or low-quality content is rejected before it enters the database

- High-scoring content accumulates as reusable assets, indexed by structured attributes

- Rules and scoring decide what gets shown, not unconstrained AI
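In code, the offline loop in the list above might look roughly like this. It is a minimal sketch, not the Safebots implementation. `Candidate`, `Library`, and `run_offline_batch` are hypothetical names. The validator, scorer, and acceptance rule are passed in as plain functions so that generation, scoring, and the policy decision stay separate.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class Candidate:
    """Hypothetical record: generated content plus the attributes used to index it."""
    content: str
    attributes: dict[str, str]
    scores: dict[str, float] = field(default_factory=dict)


@dataclass
class Library:
    """Accepted assets, indexed by (attribute, value) for rule-based lookup."""
    assets: list[Candidate] = field(default_factory=list)
    index: dict[tuple[str, str], list[int]] = field(default_factory=dict)

    def add(self, item: Candidate) -> None:
        position = len(self.assets)
        self.assets.append(item)
        for key, value in item.attributes.items():
            self.index.setdefault((key, value), []).append(position)

    def find(self, key: str, value: str) -> list[Candidate]:
        return [self.assets[i] for i in self.index.get((key, value), [])]


def run_offline_batch(
    candidates: Iterable[Candidate],
    validate: Callable[[Candidate], bool],
    score: Callable[[Candidate], dict[str, float]],
    accept: Callable[[dict[str, float]], bool],
    library: Library,
) -> int:
    """Validate, score, and gate a batch; only accepted items reach the library."""
    accepted = 0
    for item in candidates:
        if not validate(item):
            continue  # unsafe or malformed: dropped before storage
        item.scores = score(item)
        if accept(item.scores):  # rules, not the generator, make the call
            library.add(item)
            accepted += 1
    return accepted


library = Library()
batch = [
    Candidate("Happy Diwali!", {"holiday": "diwali", "lang": "en"}),
    Candidate("", {"holiday": "eid", "lang": "en"}),
    Candidate("Shana Tova!", {"holiday": "rosh_hashanah", "lang": "en"}),
]
accepted = run_offline_batch(
    batch,
    validate=lambda c: bool(c.content.strip()),
    score=lambda c: {"quality": 0.9 if "Diwali" in c.content else 0.4},  # toy scorer
    accept=lambda s: s["quality"] >= 0.7,
    library=library,
)
```

Rejection happens inside the batch loop, so nothing that fails validation or the gate is ever written to the library. The attribute index is what later lets rules, rather than a model, pick what gets shown.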

We built that system with semantic quality in mind: is this image appropriate, is this greeting well-expressed, does it respect the culture it depicts. What we didn’t know at the time is that the same architecture naturally accommodates neural-level scoring too.

TRIBE v2 as just another scorer

The key insight is that TRIBE v2 doesn’t need to change our architecture at all. It slots in as an additional capability alongside our existing vision LLM scorer. Both run offline. Both produce structured scores. Both feed into the same policy gate that decides whether a piece of content enters our library.
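One way to picture “just another scorer” is a shared interface that every scorer implements. This sketch assumes a hypothetical `Scorer` protocol that returns a flat dict of named floats. The two scorers are stubs with made-up values, standing in for the vision LLM and the TRIBE v2 sidecar, and the gate rule is illustrative only.

```python
from typing import Protocol


class Scorer(Protocol):
    """Hypothetical interface: any offline scorer returns named float scores."""
    name: str

    def score(self, content: bytes) -> dict[str, float]: ...


class VisionLLMScorer:
    name = "semantic"

    def score(self, content: bytes) -> dict[str, float]:
        # Stub values; a real version would query a vision LLM offline.
        return {"appropriate": 0.95, "culturally_respectful": 0.9}


class TribeScorer:
    name = "neural"

    def score(self, content: bytes) -> dict[str, float]:
        # Stub values; a real version would call the TRIBE v2 sidecar and
        # map its voxel prediction to named scores through an atlas.
        return {"comprehension": 0.8, "threat_response": 0.1}


def score_all(content: bytes, scorers: list[Scorer]) -> dict[str, float]:
    """Run every scorer and merge results under "<scorer>.<metric>" keys."""
    merged: dict[str, float] = {}
    for scorer in scorers:
        for metric, value in scorer.score(content).items():
            merged[f"{scorer.name}.{metric}"] = value
    return merged


def policy_gate(scores: dict[str, float]) -> bool:
    """Illustrative rule: every semantic check and comprehension must reach 0.7."""
    semantic_ok = all(v >= 0.7 for k, v in scores.items() if k.startswith("semantic."))
    return semantic_ok and scores.get("neural.comprehension", 0.0) >= 0.7


scores = score_all(b"<image bytes>", [VisionLLMScorer(), TribeScorer()])
admitted = policy_gate(scores)
```

Under this assumption, adding TRIBE v2 means appending one object to the scorer list. The merge step and the gate see more keys, but they keep the same shape.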

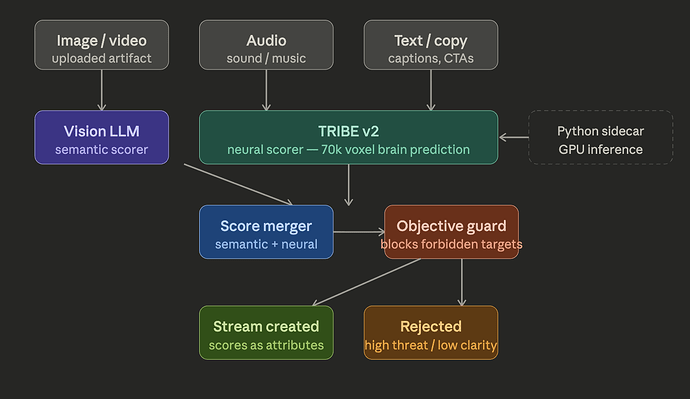

The diagram above shows the full pipeline. What’s new is the teal box — TRIBE v2 running as a Python sidecar (GPU inference, called over a local socket), with the resulting 70,000-voxel prediction mapped through a neuroscience atlas to produce named scores:

Scores we optimize toward:

- engagement: prefrontal and parietal activation, attention and processing

- comprehension: language network coherence, narrative clarity

- reward_clean: reward circuitry activation without co-activation of threat response

- self_relevance: default mode network, the “this matters to me” signal

- memory_encoding: hippocampal-adjacent activation, whether something will be remembered

Scores we monitor but never optimize toward:

- threat_response: amygdala activation, fear and anger

- reward_threat_ratio: the “outrage score”, reward activation driven by threat rather than comprehension

That last distinction is the whole game. Outrage-optimized content scores high on reward activation — it’s genuinely “engaging” in the narrow sense. But it achieves that by co-activating threat response. The ratio exposes this pattern, and our policy gate blocks any content that exhibits it, regardless of how well it scores on other dimensions.
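Here is a sketch of the atlas step and the outrage check. It rests on several assumptions:

- The prediction has already been reduced to one value per voxel (averaged over time).
- The atlas assigns an integer label to each voxel.
- The region names, thresholds, and formulas for reward_clean and reward_threat_ratio are hypothetical stand-ins, not the ones Safebots uses.

Only a subset of the named scores is shown, computed on an eight-voxel toy array.

```python
import numpy as np

# Hypothetical atlas regions grouped under some of the named scores.
SCORE_REGIONS = {
    "engagement": ["prefrontal", "parietal"],
    "comprehension": ["language"],
    "reward": ["reward_circuit"],
    "threat_response": ["amygdala"],
}
EPS = 1e-9
REWARD_FLOOR = 0.5             # hypothetical: "substantial" reward activation
MAX_REWARD_THREAT_RATIO = 0.5  # hypothetical threshold


def region_means(voxels: np.ndarray, atlas: np.ndarray, labels: dict[int, str]) -> dict[str, float]:
    """Average a per-voxel prediction over each labelled atlas region."""
    if voxels.shape != atlas.shape:
        raise ValueError("prediction and atlas must cover the same voxels")
    return {name: float(voxels[atlas == rid].mean()) for rid, name in labels.items()}


def named_scores(regions: dict[str, float]) -> dict[str, float]:
    s = {k: float(np.mean([regions[r] for r in rs])) for k, rs in SCORE_REGIONS.items()}
    # Reward counted only to the extent that threat is not co-activated.
    s["reward_clean"] = s["reward"] * (1.0 - s["threat_response"])
    # Share of the driving signal that is threat rather than comprehension.
    s["reward_threat_ratio"] = s["threat_response"] / (s["threat_response"] + s["comprehension"] + EPS)
    return s


def exhibits_outrage_pattern(s: dict[str, float]) -> bool:
    """Hard block: rewarding content driven mainly by threat; nothing offsets this."""
    return s["reward"] >= REWARD_FLOOR and s["reward_threat_ratio"] > MAX_REWARD_THREAT_RATIO


labels = {1: "prefrontal", 2: "parietal", 3: "language", 4: "reward_circuit", 5: "amygdala"}
atlas = np.array([1, 1, 2, 2, 3, 4, 5, 5])
outrage = named_scores(region_means(np.array([0.6, 0.6, 0.6, 0.6, 0.2, 0.9, 0.8, 0.8]), atlas, labels))
bridging = named_scores(region_means(np.array([0.7, 0.7, 0.7, 0.7, 0.8, 0.8, 0.1, 0.1]), atlas, labels))
```

With these toy numbers, the first prediction is rewarding but mostly threat-driven, so it is blocked. The second scores lower on raw reward but much higher on reward_clean, so it passes. The gate is a plain boolean check, so a high engagement score has no way to offset it.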

The guardrail is structural, not a policy document

This is the part that matters architecturally. Most “responsible AI” implementations work by telling a model to behave well, or by having humans review outputs, or by publishing usage policies. Those approaches don’t scale and they don’t compose.

Our guardrail is different: the objective function that the optimization loop can target is structurally constrained. The threat_response and reward_threat_ratio scores are stored as stream attributes (visible, auditable) but are not available as optimization targets. The code that runs content selection can only maximize within the permitted space. There is no configuration option to change this — changing it requires modifying the workflow definition, which is hashed and auditable.

This means we can prove, not just claim, that no content in our library was optimized for outrage. The execution trace is in the stream graph. The policy is enforced at the architecture level, not the intent level.
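Here is a minimal sketch of what “structurally constrained” could mean in code. It assumes a hypothetical workflow definition with an explicit list of permitted targets, and the objective builder refuses anything else. The definition is identified by a SHA-256 hash of its canonical JSON. Each run is recorded in a hash-chained log, a generic stand-in for the stream graph. Python alone cannot stop a determined caller from bypassing a function. The guarantee comes from pinning and auditing the hashed definition and the trace, which is what this sketch imitates.

```python
import hashlib
import json

# Hypothetical workflow definition. Changing the permitted targets means
# editing this definition, which changes its hash.
WORKFLOW = {
    "optimizable": ["engagement", "comprehension", "reward_clean",
                    "self_relevance", "memory_encoding"],
    "monitored": ["threat_response", "reward_threat_ratio"],
}


def canonical_hash(obj) -> str:
    """SHA-256 over canonical JSON, so any edit to the object changes the digest."""
    data = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


def make_objective(weights: dict[str, float], workflow: dict = WORKFLOW):
    """Weighted objective restricted to the workflow's permitted targets."""
    forbidden = set(weights) - set(workflow["optimizable"])
    if forbidden:
        raise PermissionError(f"not an optimization target: {sorted(forbidden)}")

    def objective(scores: dict[str, float]) -> float:
        return sum(w * scores[name] for name, w in weights.items())

    return objective


class Trace:
    """Append-only, hash-chained record of selection runs."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, event: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        digest = canonical_hash({"prev": prev, "event": event})
        self.entries.append({"prev": prev, "event": event, "hash": digest})

    def verify(self) -> bool:
        prev = "0" * 64
        for e in self.entries:
            if e["prev"] != prev or e["hash"] != canonical_hash({"prev": prev, "event": e["event"]}):
                return False
            prev = e["hash"]
        return True


weights = {"comprehension": 1.0, "self_relevance": 0.5}
objective = make_objective(weights)
trace = Trace()
trace.record({"workflow": canonical_hash(WORKFLOW), "targets": sorted(weights)})
```

Editing a recorded entry after the fact breaks the chain, for example rewriting its targets to threat_response. `verify()` then returns False, which is the property an auditor relies on.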

What this enables for community builders

For anyone using the Safebox platform to run a community or publish content, this translates to a concrete capability: before any piece of content — image, video, audio, copy — is approved for publication or distribution, it can be automatically scored against both semantic quality criteria (is this appropriate, well-expressed, culturally respectful) and neural impact criteria (does this promote comprehension and genuine engagement, or does it exploit threat response).

This can run as an automatic step in the content approval workflow. Content that scores well on both axes gets a green light. Content that scores well on engagement but high on threat response gets flagged for review. Content that fails the policy gate is rejected before it ever reaches community members.
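The three outcomes in that workflow map onto a small decision function. In this sketch, the score names and thresholds are assumptions. So is sending low-engagement content to review, since the text does not specify that case. The important part is the ordering: the hard policy gate is checked before anything else.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    FLAG_FOR_REVIEW = "flag_for_review"
    REJECT = "reject"


# Hypothetical thresholds; in practice they would live in the hashed workflow definition.
SEMANTIC_MIN = 0.7
RATIO_MAX = 0.5
THREAT_FLAG = 0.5
ENGAGEMENT_MIN = 0.6


def triage(s: dict[str, float]) -> Decision:
    """Approval step: hard policy gate first, then the review flag, then approval."""
    # Policy gate: fails the semantic check or shows the outrage pattern.
    if s["semantic_quality"] < SEMANTIC_MIN or s["reward_threat_ratio"] > RATIO_MAX:
        return Decision.REJECT
    # Threat-heavy content, however engaging, goes to a human.
    if s["threat_response"] >= THREAT_FLAG:
        return Decision.FLAG_FOR_REVIEW
    # Passed the semantic check and engages well: green light.
    if s["engagement"] >= ENGAGEMENT_MIN:
        return Decision.APPROVE
    # Assumption: anything else is left for a human to decide.
    return Decision.FLAG_FOR_REVIEW


base = {"semantic_quality": 0.9, "engagement": 0.8, "threat_response": 0.1, "reward_threat_ratio": 0.1}
decisions = [
    triage(base),
    triage({**base, "threat_response": 0.7, "reward_threat_ratio": 0.3}),
    triage({**base, "reward_threat_ratio": 0.8}),
]
```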

Over time, the scores accumulate as attributes on content streams. This means your content library becomes searchable and filterable by neural quality, not just semantic tags. The pieces that score highest on comprehension + self_relevance + reward_clean naturally surface for reuse and resharing. The flywheel runs in the direction of content that makes people feel connected and understood, not afraid.
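Because the scores live on the content as attributes, reuse becomes an ordinary filter and sort. This sketch assumes each asset exposes its scores as a dict on a hypothetical `Asset` record. It ranks by the unweighted sum of comprehension, self_relevance, and reward_clean, since the text gives no weights.

```python
from dataclasses import dataclass, field

# Scores named in the post; equal weighting is an assumption.
BRIDGING_SCORES = ("comprehension", "self_relevance", "reward_clean")


@dataclass
class Asset:
    """Hypothetical stand-in for a content stream and its score attributes."""
    stream_id: str
    scores: dict[str, float] = field(default_factory=dict)


def bridging_score(asset: Asset) -> float:
    # Missing scores count as zero rather than raising.
    return sum(asset.scores.get(name, 0.0) for name in BRIDGING_SCORES)


def top_for_reuse(assets: list[Asset], k: int = 3, min_comprehension: float = 0.0) -> list[Asset]:
    """Filter on one score attribute, then rank by the bridging sum, highest first."""
    pool = [a for a in assets if a.scores.get("comprehension", 0.0) >= min_comprehension]
    return sorted(pool, key=bridging_score, reverse=True)[:k]


library = [
    Asset("a", {"comprehension": 0.9, "self_relevance": 0.8, "reward_clean": 0.7}),
    Asset("b", {"comprehension": 0.5, "self_relevance": 0.5, "reward_clean": 0.5}),
    Asset("c", {"comprehension": 0.7, "self_relevance": 0.9, "reward_clean": 0.9}),
]
```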

The bigger picture

TRIBE v2 will be used to manipulate people. That’s already happening with cruder tools, and this one is better. Because the weights are openly released, there’s no meaningful barrier to anyone doing it.

The answer isn’t to avoid the technology. It’s to build systems where the technology’s optimization target is declared, constrained, and auditable — and where the default target is something worth optimizing for.

We chose bridging over division: content that activates self-relevance and comprehension together, across cultural lines, without activating threat. That’s what the holiday system was always trying to do. We just didn’t have the neural vocabulary to describe it until now.

The infrastructure was right. The model is new. They fit together.

Safebox is an open-source platform for building AI-powered community tools with structural safety guarantees. Read more at safebots.ai.

TRIBE v2 paper and weights: ai.meta.com

Original Safebots architecture post: